Two RAGs

I built FSS-RAG first. It does a lot. Then I built FSS Mini RAG. It does less — deliberately.

The second one taught me more about the first than building the first did.

What Full RAG Looks Like

FSS-RAG is a complete retrieval-augmented generation pipeline. It indexes documents, maintains a vector store, handles chunking strategies, manages embedding models, runs queries, reranks results, and feeds a language model with retrieved context.

It works well. It handles the complexity you need when the documents are large, the queries are varied, and the answers need to be accurate.

It also has a lot of moving parts. Which means when something goes wrong — and retrieval systems go wrong in interesting ways — the failure point isn't always obvious.

Why I Built a Smaller One

I kept wanting to test retrieval ideas quickly. Change a chunking strategy. Try a different similarity threshold. See how a different embedding model behaved on the same corpus.

In FSS-RAG, every change meant touching several interconnected pieces. The test cycle was slow.

FSS Mini RAG is a minimal implementation. It does the core thing — embed documents, store vectors, retrieve by similarity — with as little else as possible. No reranking. No complex chunking logic. No abstraction layers between you and the retrieval step.
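To show how little that core loop needs, here is a sketch of embed, store, and retrieve in plain Python. It is not FSS Mini RAG's code. The `embed` function is a hypothetical stand-in for a real embedding model: a hashed bag-of-words vector, which has no real semantics. `MiniStore` is likewise an illustrative name.

```python
import math
import re
import zlib
from collections import Counter

DIM = 256  # size of the toy embedding space (assumption, not a real model)

def embed(text: str) -> list[float]:
    """Hypothetical stand-in for an embedding model: a hashed bag-of-words
    vector, L2-normalised so a dot product equals cosine similarity."""
    vec = [0.0] * DIM
    for word, count in Counter(re.findall(r"[a-z0-9]+", text.lower())).items():
        vec[zlib.crc32(word.encode()) % DIM] += count
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

class MiniStore:
    """In-memory vector store: a plain list of (text, vector) pairs."""

    def __init__(self) -> None:
        self.items: list[tuple[str, list[float]]] = []

    def add(self, text: str) -> None:
        # Embed once at index time and keep the vector next to the text.
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str]]:
        # Brute-force cosine similarity against every stored vector.
        q = embed(query)
        scored = [(sum(a * b for a, b in zip(q, v)), t) for t, v in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]

store = MiniStore()
for doc in [
    "The invoice is due within 30 days.",
    "Holiday leave requires manager approval.",
    "Passwords must be rotated every 90 days.",
]:
    store.add(doc)

print(store.search("when is the invoice due", k=1))
```

Swapping in a real embedding model means replacing `embed` and nothing else. That is what makes a workbench useful: one change, one rerun.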

It's a workbench, not a product.

What You Learn From Minimum Viable Retrieval

When you strip everything away, the retrieval problem becomes very clear.

Chunk size is not neutral.

This was the first thing the minimal system made obvious. In a full pipeline, chunking is a configuration option you set and mostly forget. In a minimal system, you feel it immediately.

Large chunks retrieve more context but dilute the relevance signal. A thousand-word chunk that contains one relevant sentence will usually score lower than a hundred-word chunk that's mostly relevant, because the whole chunk gets a single embedding that has to represent everything in it. The one relevant sentence is effectively averaged in with the rest.

Small chunks have sharp relevance signals but lose context. A single sentence retrieved without its surrounding paragraph is often ambiguous. The answer is there, but the reader can't interpret it.

The right chunk size depends entirely on how your documents are structured and what kinds of questions you're answering. There's no universal answer.
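In a naive chunker, chunk size is literally one parameter. A minimal sketch of fixed-size chunking, assuming words as the unit (real pipelines usually count tokens). The name `chunk_words` and its `overlap` parameter are illustrative, not FSS Mini RAG's API.

```python
def chunk_words(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of `size` words, each sharing `overlap`
    words with the previous chunk."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks

text = " ".join(f"w{i}" for i in range(10))
print(chunk_words(text, 4))
# ['w0 w1 w2 w3', 'w4 w5 w6 w7', 'w8 w9']
print(chunk_words(text, 4, overlap=2))
# ['w0 w1 w2 w3', 'w2 w3 w4 w5', 'w4 w5 w6 w7', 'w6 w7 w8 w9']
```

Overlap is one common way to soften the context loss at chunk boundaries, at the cost of storing and embedding some text twice.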

Retrieval count changes everything.

Retrieve one result: you'd better hope it's the right one.

Retrieve ten results: the right answer is almost certainly in there, but now you're feeding ten times as much context to the language model. Cost, latency, and prompt window all expand.

The minimum viable system forces you to choose. The full system lets you hide from the choice behind configuration defaults.
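The cost side of that choice is easy to see. A sketch, assuming hypothetical retrieval results of ten 100-word chunks and using word count as a rough proxy for prompt tokens. `top_k` and `context_for` are illustrative names.

```python
import heapq

def top_k(scored: list[tuple[float, str]], k: int) -> list[tuple[float, str]]:
    # The k highest-scoring (score, chunk) pairs, best first.
    return heapq.nlargest(k, scored, key=lambda pair: pair[0])

def context_for(scored: list[tuple[float, str]], k: int) -> str:
    # Everything retrieved ends up in the prompt, so context grows with k.
    return "\n\n".join(text for _, text in top_k(scored, k))

# Hypothetical retrieval results: ten chunks of 100 words each.
scored = [(0.9 - i * 0.05, f"chunk{i} " + "word " * 99) for i in range(10)]

for k in (1, 3, 10):
    print(k, len(context_for(scored, k).split()))
# 1 100
# 3 300
# 10 1000
```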

Similarity scores are relative, not absolute.

In the minimal system, I could watch scores directly. On a well-matched query against a focused corpus, the top result scores 0.88 and the second scores 0.71. Clear winner.

On a vague query against a large diverse corpus, the top result scores 0.61 and the second scores 0.59. No clear winner. Both might be relevant. Neither might be.

The minimal system surfaces this. A full pipeline tends to return top-k results regardless and hope the language model sorts it out. Sometimes it does. Often it produces confidently wrong answers from marginally relevant documents.
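One way to stop hiding from weak matches is to check both the top score and its margin over the runner-up before trusting a result. A sketch, assuming the hypothetical function `confident_hits`. The threshold values are placeholders: because scores are relative, they would need calibrating per embedding model and corpus, and the gap check is the more portable of the two.

```python
def confident_hits(
    scored: list[tuple[float, str]],
    min_score: float = 0.75,  # placeholder, calibrate per model and corpus
    min_gap: float = 0.1,     # placeholder margin over the runner-up
    k: int = 3,
) -> tuple[list[tuple[float, str]], bool]:
    """Return the top-k hits that clear min_score, plus a flag that is True
    only when the best hit clears min_score AND beats the runner-up by
    at least min_gap."""
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    hits = [pair for pair in ranked[:k] if pair[0] >= min_score]
    if not ranked:
        return hits, False
    best = ranked[0][0]
    runner_up = ranked[1][0] if len(ranked) > 1 else 0.0
    return hits, best >= min_score and best - runner_up >= min_gap

print(confident_hits([(0.88, "focused match"), (0.71, "related")]))
# ([(0.88, 'focused match')], True)
print(confident_hits([(0.61, "maybe"), (0.59, "also maybe")]))
# ([], False)
```

When the flag comes back False, the pipeline can say it found nothing reliable instead of handing marginal chunks to the language model.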

What Moved Back Into Full RAG

Building the minimal system clarified what the complexity in FSS-RAG is actually for.

Reranking exists because first-pass retrieval by cosine similarity isn't good enough when the corpus is large. The second pass — using a more precise model to score the shortlisted results — catches things the fast first pass misses. You need the fast pass to be fast, and the slow pass to be accurate.
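The two-pass shape can be sketched independently of the models. Both scorers here are hypothetical toys: `word_overlap` stands in for the fast first pass, and `phrase_aware` stands in for the precise second pass (in practice often a cross-encoder or similar reranking model). Only the shortlisted documents ever reach the slow scorer.

```python
from typing import Callable

Scorer = Callable[[str, str], float]

def retrieve_then_rerank(
    query: str,
    docs: list[str],
    fast: Scorer,
    slow: Scorer,
    shortlist: int = 20,
    k: int = 3,
) -> list[str]:
    # Pass 1: score the whole corpus with the cheap scorer, keep a shortlist.
    first = sorted(docs, key=lambda d: fast(query, d), reverse=True)[:shortlist]
    # Pass 2: re-score only the shortlist with the expensive scorer.
    return sorted(first, key=lambda d: slow(query, d), reverse=True)[:k]

def word_overlap(query: str, doc: str) -> float:
    # Toy fast scorer: number of shared words.
    return float(len(set(query.lower().split()) & set(doc.lower().split())))

def phrase_aware(query: str, doc: str) -> float:
    # Toy "precise" scorer: rewards the exact phrase, not just shared words.
    bonus = 10.0 if query.lower() in doc.lower() else 0.0
    return word_overlap(query, doc) + bonus

docs = [
    "leave requests and holiday dates",
    "how to book holiday leave",
    "car parking rules",
]
print(retrieve_then_rerank("holiday leave", docs, word_overlap, phrase_aware, k=1))
# ['how to book holiday leave']
```

Both of the first two documents tie on the fast pass; the slow pass breaks the tie. With `shortlist=1`, the better document would never reach the second pass, which is why the shortlist size matters as much as the reranker.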

Chunking strategies exist because documents aren't uniform. A contract has a different structure than a policy document, which has a different structure than an email thread. Treating them all with the same fixed-size chunking produces mediocre results for all of them.
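A minimal sketch of structure-aware chunking, dispatching on document type. The splitting rules (numbered clauses for contracts, "From:" headers for email threads, blank lines for everything else) are hypothetical examples, not FSS-RAG's actual strategies.

```python
import re
from typing import Callable

def chunk_contract(text: str) -> list[str]:
    # Hypothetical rule: a new chunk starts at each numbered clause ("1. ", "12. ").
    return [p.strip() for p in re.split(r"\n(?=\d+\.\s)", text) if p.strip()]

def chunk_email_thread(text: str) -> list[str]:
    # Hypothetical rule: a new chunk starts at each "From:" header line.
    return [p.strip() for p in re.split(r"\n(?=From:)", text) if p.strip()]

def chunk_paragraphs(text: str) -> list[str]:
    # Fallback for prose such as policy documents: split on blank lines.
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]

CHUNKERS: dict[str, Callable[[str], list[str]]] = {
    "contract": chunk_contract,
    "email": chunk_email_thread,
}

def chunk(doc_type: str, text: str) -> list[str]:
    # Pick the splitter by document type; unknown types get paragraphs.
    return CHUNKERS.get(doc_type, chunk_paragraphs)(text)

contract = "1. Definitions\nTerms used here.\n2. Payment\nPay within 30 days."
print(chunk("contract", contract))
# ['1. Definitions\nTerms used here.', '2. Payment\nPay within 30 days.']
```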

Metadata filtering exists because semantic similarity ignores time, author, and document type — things that often matter as much as semantic relevance. You want results from the last six months, not the most semantically similar document from three years ago that's been superseded.
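A sketch of filter-then-rank, assuming each chunk carries a similarity score plus hypothetical `doc_type` and `updated` fields, with "six months" approximated as 183 days. Many vector stores apply filters like this inside the query itself; doing it in plain Python here just makes the order of operations visible.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class Chunk:
    text: str
    score: float   # similarity to the query, computed by the retriever
    doc_type: str  # hypothetical metadata field
    updated: date  # hypothetical metadata field

def filter_then_rank(
    chunks: list[Chunk],
    today: date,
    max_age_days: int = 183,  # roughly six months
    doc_type: Optional[str] = None,
    k: int = 3,
) -> list[Chunk]:
    # Hard filters first: semantic similarity knows nothing about dates or types.
    cutoff = today - timedelta(days=max_age_days)
    kept = [
        c for c in chunks
        if c.updated >= cutoff and (doc_type is None or c.doc_type == doc_type)
    ]
    # Only then rank what survives by similarity.
    return sorted(kept, key=lambda c: c.score, reverse=True)[:k]

chunks = [
    Chunk("2022 leave policy", 0.92, "policy", date(2022, 1, 10)),
    Chunk("current leave policy", 0.80, "policy", date(2025, 3, 1)),
]
print([c.text for c in filter_then_rank(chunks, today=date(2025, 6, 1))])
# ['current leave policy']
```

The superseded document has the higher similarity score and still never reaches the language model.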

These aren't bureaucratic overhead. They're solutions to real failure modes that the minimal system surfaced clearly.

When to Use Which

FSS Mini RAG is the right tool for:

- Testing a new embedding model against a corpus
- Understanding retrieval behaviour on a new document set
- Building a proof of concept quickly
- Teaching someone how RAG actually works

FSS-RAG is the right tool for:

- Production workloads on real document collections
- Anything where answer accuracy matters
- Systems that will be queried in ways you can't predict

The minimal version is a thinking tool. The full version is a production tool. They're not competing — the minimal one made the full one better.

The Actual Lesson

Building systems twice, at different levels of abstraction, is one of the most useful things you can do.

The first build gives you something that works. The second build — simpler, more direct, built with the knowledge of what the first one does — gives you understanding.

Understanding lets you debug the first one better. It lets you explain it. It lets you make decisions about it that aren't just cargo-culted from tutorials.

If you're building RAG systems and you haven't built a minimal version from scratch, I'd recommend it. Not to replace your full pipeline. To understand it.

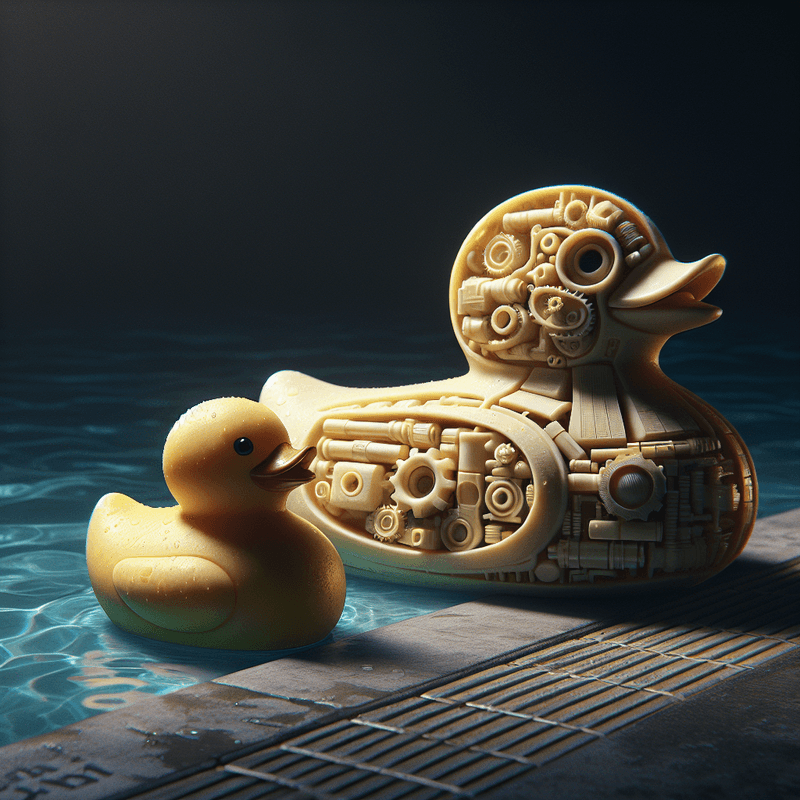

Part of the Duck Pool Experiments series — occasional build logs alongside the theory.