Building the Manifold Visualiser

I kept explaining semantic search to people and watching their eyes glaze over.

Not because they weren't interested. Because there was nothing to point at.

The Problem With Invisible Systems

Embedding models are everywhere now. They power search, recommendation systems, document retrieval, RAG pipelines. They're also completely invisible. You type a query, results appear. The mechanism — the thing that decided which results matter — leaves no trace on screen.

I'd built enough RAG systems to understand what was happening inside. But when I tried to explain it — this document scored 0.82 similarity, this one scored 0.71 — it meant nothing. Numbers without context.

What I wanted was to show the shape of how the model read a piece of text. Not cosine scores in a CSV. The actual text, with meaning visible on top of it.

What It Does

The Manifold Visualiser takes a piece of text and a query. It runs both through an embedding model, measures similarity at three levels — word, sentence, paragraph — and overlays the results directly on the text.
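Here's a minimal sketch of what scoring at three levels can look like. It splits a document into paragraphs, sentences and words, embeds each unit, and compares it to the query by cosine similarity. `toy_embed` is a hypothetical stand-in that hashes words into a count vector (the "hashing trick"). It only sees shared words, not meaning, so you'd swap in a real embedding model's encode call. The split rules are assumptions too: blank lines separate paragraphs, and terminal punctuation ends a sentence.

```python
import math
import re
import zlib

DIM = 256  # width of the toy vectors; a real model fixes its own dimension


def toy_embed(text: str) -> list[float]:
    """Hypothetical stand-in for an embedding model.

    Hashes lowercase word tokens into a fixed-size count vector. It only
    captures shared words, not meaning.
    """
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9']+", text.lower()):
        vec[zlib.crc32(token.encode("utf-8")) % DIM] += 1.0
    return vec


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def split_levels(text: str) -> dict[str, list[str]]:
    """Paragraphs on blank lines, sentences after . ! or ?, words as runs of word characters."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    sentences = [s for p in paragraphs for s in re.split(r"(?<=[.!?])\s+", p) if s]
    words = re.findall(r"\w+", text)
    return {"paragraph": paragraphs, "sentence": sentences, "word": words}


def score_levels(
    text: str, query: str, embed=toy_embed
) -> dict[str, list[tuple[str, float]]]:
    """Score every unit at every level against the same query embedding."""
    q = embed(query)
    return {
        level: [(unit, cosine(embed(unit), q)) for unit in units]
        for level, units in split_levels(text).items()
    }


doc = "Invoices are due in 30 days. Late fees apply.\n\nOur office is closed on Sundays."
for level, scored in score_levels(doc, "late fees").items():
    best_unit, best_score = max(scored, key=lambda pair: pair[1])
    print(f"{level:>9}: {best_unit!r} ({best_score:.2f})")
```

With a real model, you'd batch the units into as few encode calls as possible rather than embedding them one at a time.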

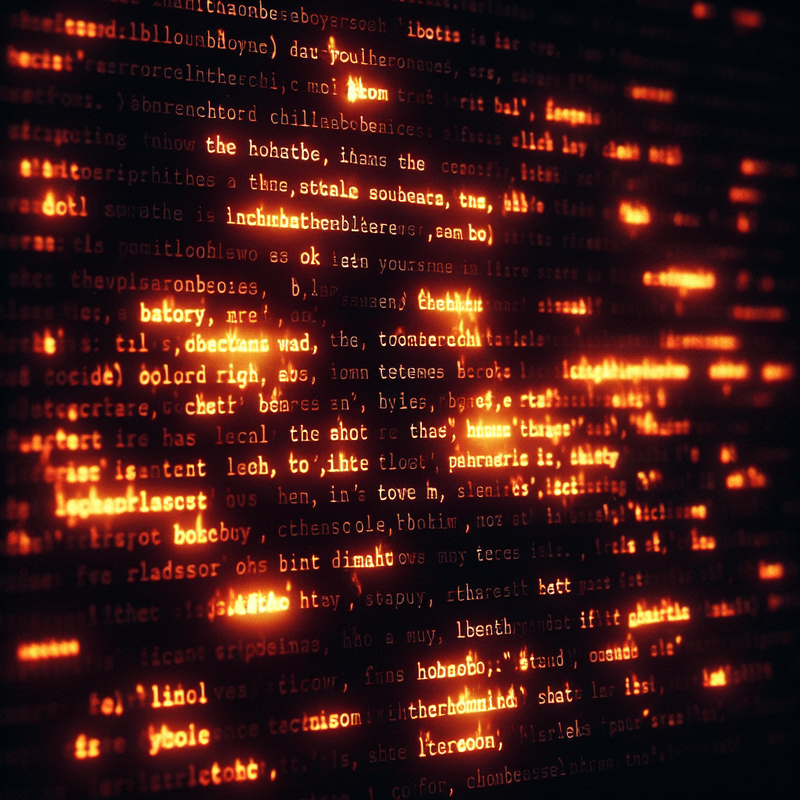

Words that are semantically close to your query glow. Sentences that match get underlined. Paragraphs that are relevant get a background halo.

You can see, at a glance, exactly which parts of a document the model considered relevant — and how strongly. It's the inside of a retrieval system made visible.
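One way to turn those three encodings into markup is sketched below in Python that emits HTML with inline CSS. It assumes each unit's score has already been mapped to an intensity between 0 and 1. The colours, blur radius, thickness and opacity caps are arbitrary choices of mine, not the tool's actual styling.

```python
import html


def _clamp(x: float) -> float:
    """Keep intensities inside [0, 1]."""
    return max(0.0, min(1.0, x))


def glow_word(word: str, intensity: float) -> str:
    """Word level: a yellow glow whose opacity follows the intensity."""
    alpha = _clamp(intensity)
    return (f'<span style="text-shadow: 0 0 6px rgba(255, 200, 0, {alpha:.2f})">'
            f"{html.escape(word)}</span>")


def underline_sentence(inner_html: str, intensity: float) -> str:
    """Sentence level: underline up to 3px thick, skipped below 0.5px.

    inner_html is assumed to be escaped already (e.g. built from glow_word).
    """
    px = 3.0 * _clamp(intensity)
    if px < 0.5:
        return inner_html
    return (f'<span style="text-decoration: underline; '
            f'text-decoration-thickness: {px:.1f}px">{inner_html}</span>')


def halo_paragraph(inner_html: str, intensity: float) -> str:
    """Paragraph level: a pale blue background, capped at 30% opacity."""
    alpha = 0.3 * _clamp(intensity)
    return f'<p style="background: rgba(80, 160, 255, {alpha:.2f})">{inner_html}</p>'


words = [("Late", 0.2), ("fees", 0.9), ("apply.", 0.1)]
sentence = " ".join(glow_word(w, s) for w, s in words)
print(halo_paragraph(underline_sentence(sentence, 0.8), 0.6))
```

Nesting the three wrappers is what lets all the levels show at once: the glow sits inside the underline, which sits inside the halo.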

What I Got Wrong First

My first instinct was to show similarity scores as numbers next to each word. Percentages. Decimals.

It was useless. The scores didn't mean anything in isolation. Was 0.67 good? Compared to what?

The insight was that what matters is relative similarity, not absolute scores. You want to see that this sentence scored higher than those three sentences. The visual encoding (brightness, underline thickness, glow intensity) shows that naturally. Your eye reads contrast. It doesn't read numbers.
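This post doesn't pin down a specific normalisation. One plausible choice, sketched below, is min-max rescaling within a single document and level. It turns "was 0.67 good?" into "how does this compare to everything else here?"

```python
def relative_intensity(scores: list[float]) -> list[float]:
    """Min-max rescale raw similarity scores within one document and level,
    so the best match maps to 1.0 and the weakest to 0.0."""
    if not scores:
        return []
    lo, hi = min(scores), max(scores)
    if hi == lo:  # every unit scored the same: there is no contrast to show
        return [0.0] * len(scores)
    return [(s - lo) / (hi - lo) for s in scores]


# The same raw 0.67 reads very differently depending on its neighbours.
print(relative_intensity([0.61, 0.64, 0.67]))  # 0.67 maps to 1.0, the brightest
print(relative_intensity([0.67, 0.78, 0.85]))  # 0.67 maps to 0.0, the dimmest
```

Min-max scaling is sensitive to a single outlier stretching the range. If that becomes a problem, ranking the units and using percentiles is a common alternative.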

I threw out the numbers and went fully visual. The tool got dramatically more useful immediately.

The Three-Level Problem

Similarity at word level and similarity at sentence level are different things.

A single word might match your query strongly but sit in a sentence that doesn't. The word "invoice" might glow brightly in a paragraph about invoicing history that's completely irrelevant to your specific question about payment terms.

Showing all three levels simultaneously — word, sentence, paragraph — lets you see when those levels agree and when they conflict. When they all agree, you've found genuinely relevant content. When they disagree, something interesting is happening.

That disagreement is often where the most useful information lives. A paragraph that's relevant at the paragraph level but has no strongly matching words is likely using different vocabulary to describe the same concept. That's exactly the kind of retrieval challenge that's hardest to debug otherwise.
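Here's a rough way to flag those disagreements automatically. It assumes word and paragraph scores have already been rescaled to 0..1 within the document, and it compares a paragraph's score with the strongest word inside it. The `high` and `low` cut-offs and the label names are hypothetical. A `vocabulary-mismatch` flag is a prompt to look closer, not proof.

```python
def classify_agreement(
    paragraph_score: float,
    word_scores: list[float],
    high: float = 0.6,  # hypothetical cut-offs on a 0-1 relative scale
    low: float = 0.3,
) -> str:
    """Compare one paragraph's score with the peak score of the words inside it."""
    peak_word = max(word_scores, default=0.0)
    para_high = paragraph_score >= high
    word_high = peak_word >= high
    if para_high and word_high:
        return "agree-relevant"       # both levels point the same way
    if not para_high and not word_high:
        return "agree-irrelevant"
    if para_high and peak_word < low:
        return "vocabulary-mismatch"  # relevant meaning, different words
    if word_high and paragraph_score < low:
        return "keyword-only"         # e.g. "invoice" in the wrong paragraph
    return "mixed"                    # disagreement, but not a clear-cut case


print(classify_agreement(0.8, [0.1, 0.2]))  # paragraph matches, no word does
print(classify_agreement(0.1, [0.9, 0.1]))  # one word matches, paragraph doesn't
```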

Rate Limiting a Public Demo

The tool runs embedding models and, optionally, language model features. Those are not free to compute.

I rate-limited the demo to one corpus load per user every fifteen minutes. No login required, no account, no friction: just a polite wait between experiments.
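This post doesn't say how a "user" is identified without a login. The sketch below assumes some client key, such as an IP address or an anonymous session cookie, and keeps the last load time per key in memory within a single process. Running several server instances would need those timestamps in a shared store instead.

```python
import time


class CorpusLoadLimiter:
    """Allow one corpus load per client key per window (default 15 minutes)."""

    def __init__(self, window_seconds: float = 15 * 60, clock=time.monotonic):
        self.window = window_seconds
        self.clock = clock  # injectable, so tests don't have to sleep
        # Note: keys are never evicted; a long-running server would prune old ones.
        self._last_load: dict[str, float] = {}

    def try_load(self, client_key: str) -> tuple[bool, float]:
        """Return (allowed, seconds until the next load is allowed)."""
        now = self.clock()
        last = self._last_load.get(client_key)
        if last is not None and now - last < self.window:
            # Refused requests don't reset the timer.
            return False, self.window - (now - last)
        self._last_load[client_key] = now
        return True, 0.0


limiter = CorpusLoadLimiter()
allowed, wait = limiter.try_load("203.0.113.7")  # hypothetical key: a client IP
if not allowed:
    print(f"Please wait about {wait / 60:.0f} more minutes before loading another corpus.")
```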

The LLM features (query rewriting, entity extraction, semantic splitting) are off for public users. The core experience — embed a document, run queries, see the heatmap — is fully available.

That turned out to be the right call. The core experience is the interesting part. The LLM features are power-user additions that would confuse more than they'd help for someone arriving cold.

What It Taught Me

Building something visual forced me to think clearly about what I actually understood.

I had assumptions about how similarity scores distribute across a typical document. They were mostly wrong. Real documents have much more variation than I expected — sharp spikes of relevance surrounded by near-zero background. The visual made that obvious immediately. The numbers never had.

It also showed me something about retrieval system design: the granularity of your chunks matters enormously. If you chunk a document by paragraph, you retrieve paragraphs. If a single sentence in that paragraph is relevant, you still pull the whole thing. The word-level heatmap shows exactly how much of a retrieved chunk is actually earning its place.
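To put a rough number on "earning its place", this sketch measures what share of a retrieved chunk's words sit in sentences that clear a relevance threshold. It's a hypothetical helper, not something the visualiser reports. The threshold and the word-count weighting are my assumptions, and the example chunk is made up.

```python
def chunk_utilisation(sentences: list[tuple[str, float]], threshold: float = 0.5) -> float:
    """Share of a chunk's words that sit in sentences scoring at or above threshold.

    `sentences` holds (sentence_text, relative_score) pairs for one retrieved chunk.
    """
    total = sum(len(text.split()) for text, _ in sentences)
    if total == 0:
        return 0.0
    useful = sum(len(text.split()) for text, score in sentences if score >= threshold)
    return useful / total


chunk = [
    ("Late fees are 2% per month.", 0.9),
    ("Our office was founded in 1998 by two brothers.", 0.1),
]
print(chunk_utilisation(chunk))  # 6 of 15 words sit in the matching sentence
```

A consistently low figure across many queries would suggest the chunks are larger than the questions being asked of them.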

Try It

The Manifold Visualiser is live at /manifold/. Paste in any text — a document, a policy, an article — and run queries against it.

Watch which words light up. Watch which sentences the model reaches for. If you've ever wondered what semantic search actually does to your text, this is the clearest answer I know how to give.

Part of the Duck Pool Experiments series — occasional build logs alongside the theory.