Describing Ducks

I ask you to get me a duck. You jump in the pond. Every duck scatters.

Two Ways to Get a Duck

You're standing by the pond. I'm standing next to you. I want a specific duck.

Option 1: You jump into the pond, splash through the water, wade toward the general area, disturbing every duck along the way. By the time you get close, the duck I wanted has moved. Everything has shifted. You grab a duck, but probably not the one I meant.

Option 2: You're already in the pond, standing still, close to the duck I want. You reach down calmly and pick it up without disturbing the water. Got it. First try.

The difference? Whether you're starting from outside the system or already inside it.

The Language Problem

When I describe a duck using words, I'm compressing its location in the pond into a sequence of sounds.

"The yellow duck near the northwest corner."

That's not coordinates. That's a rough approximation, a compressed description, a lossy translation from position to language.

You hear those words. Your brain translates them back into an approximate location. You look for something that matches "yellow" and "northwest corner" and "duck."

But we just went through four steps:

1. Exact position in space
2. Compressed language description
3. Your interpretation of that description
4. Approximate position in space

At every step, precision is lost.
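Here is a rough sketch of that round trip in Python. Everything in it is an assumption made for illustration: the pond is a hypothetical 10 m square, the "language" has only four phrases (one per quadrant), and the listener guesses the center of whichever quadrant they hear.

```python
import math

POND_SIZE = 10.0  # hypothetical square pond, 10 m on each side

def describe(x, y):
    """Compress an exact position into a coarse phrase such as 'northwest'.

    x is meters from the west edge, y is meters from the north edge.
    """
    ns = "north" if y < POND_SIZE / 2 else "south"
    ew = "west" if x < POND_SIZE / 2 else "east"
    return ns + ew

def interpret(phrase):
    """Listener's best guess: the center of the quadrant the phrase names."""
    y = POND_SIZE * (0.25 if phrase.startswith("north") else 0.75)
    x = POND_SIZE * (0.25 if phrase.endswith("west") else 0.75)
    return (x, y)

exact = (2.0, 3.0)            # step 1: exact position
phrase = describe(*exact)     # step 2: compressed description
guess = interpret(phrase)     # steps 3 and 4: interpretation, approximate position
error = math.dist(exact, guess)

print(phrase)           # northwest
print(guess)            # (2.5, 2.5)
print(f"{error:.2f}")   # 0.71
```

The duck was at (2.0, 3.0). The listener ends up at (2.5, 2.5). Close, but not the same spot, and any other duck in that quadrant would have produced the exact same phrase.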

The Yellow Duck Problem

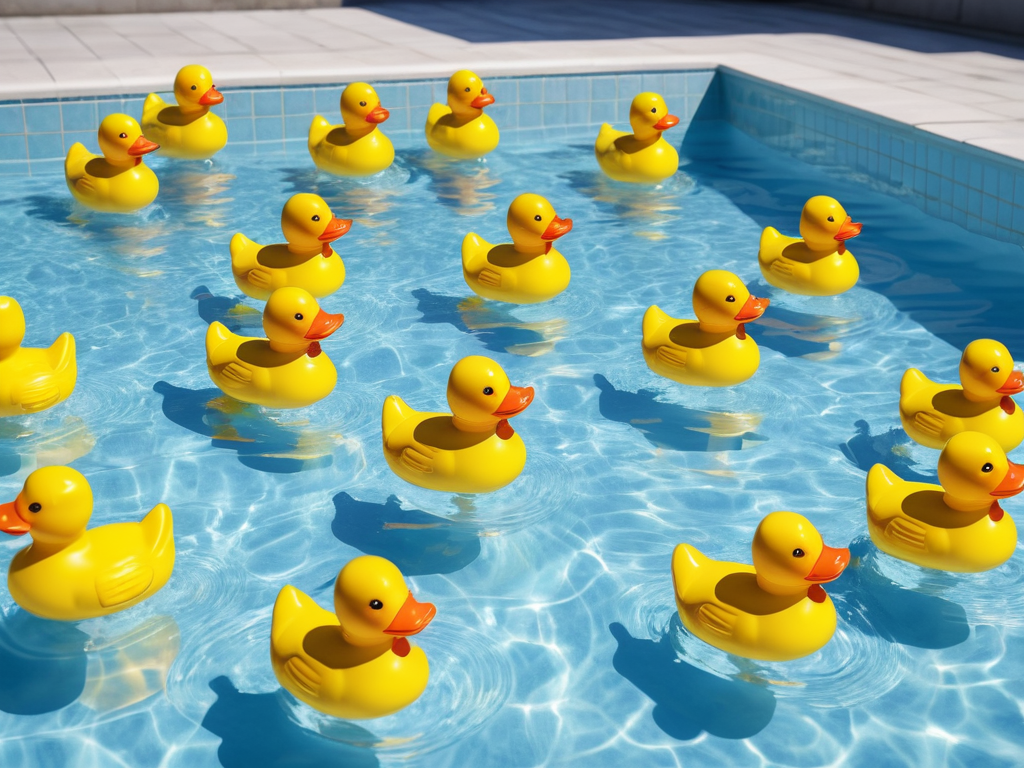

"Get me the yellow duck."

You look at the pond. There are twelve yellow ducks.

"Which one?"

"The yellow duck next to the other yellow duck."

Okay, now there are three candidates. Still ambiguous.

"The yellow duck on the left side."

From your position, that's four ducks. From my position, it's one obvious duck.

This is what happens when we use language to describe position in semantic space. The words are vague. They create a fuzzy region, not a precise point. "Yellow" eliminates some possibilities but leaves many others.
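A small sketch makes the "left side" problem concrete. The ducks, their positions, and the two viewpoints below are all invented for this example: the speaker is assumed to face north and the listener to face south, so they disagree about which half of the pond is "left."

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Duck:
    name: str
    color: str
    x: float  # meters from the west edge
    y: float  # meters from the north edge

# Hypothetical pond contents, made up for this sketch.
DUCKS = [
    Duck("a", "yellow", 1.0, 2.0),
    Duck("b", "yellow", 8.0, 3.0),
    Duck("c", "yellow", 7.0, 6.0),
    Duck("d", "white", 2.0, 7.0),
    Duck("e", "yellow", 9.0, 8.0),
    Duck("f", "yellow", 6.0, 1.0),
]

POND_WIDTH = 10.0

def on_left(duck, facing):
    """'Left' depends on which way the viewer is facing."""
    if facing == "north":   # looking north, left is the west half
        return duck.x < POND_WIDTH / 2
    if facing == "south":   # looking south, left is the east half
        return duck.x > POND_WIDTH / 2
    raise ValueError(f"unsupported facing: {facing}")

yellow = [d for d in DUCKS if d.color == "yellow"]
speaker_view = [d.name for d in yellow if on_left(d, "north")]
listener_view = [d.name for d in yellow if on_left(d, "south")]

print(len(yellow))      # 5
print(speaker_view)     # ['a']
print(listener_view)    # ['b', 'c', 'e', 'f']
```

Same words, same pond, two different answers. The speaker means one duck. The listener sees four candidates, and none of them is the right one.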

A Better System: Coordinates

"Get me the duck at coordinates: 3 meters from the north edge, 2 meters from the west edge."

Now you don't need to interpret. You don't need to guess what "yellow" means or which edge is "left." You have numbers. Measurements. Precise location.

You walk to the north edge, count 3 meters out, move 2 meters from the west edge, and there it is. One duck. No ambiguity.

The difference between "the yellow duck over there" and "3 meters from the north edge, 2 meters from the west edge" is the difference between vague language and precise coordinates.
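In code, a coordinate lookup needs no interpretation step at all. This is a minimal sketch with made-up duck positions and a hypothetical `duck_at` helper; the 0.5 m tolerance is an assumption standing in for "close enough to be the duck you meant."

```python
import math

# Hypothetical duck positions: (meters from west edge, meters from north edge)
DUCKS = {
    "a": (2.0, 3.0),
    "b": (2.4, 6.5),
    "c": (7.0, 3.1),
}

def duck_at(from_north, from_west, tolerance=0.5):
    """Return the duck nearest the given coordinates, or None if none is close."""
    target = (from_west, from_north)
    name, position = min(DUCKS.items(), key=lambda item: math.dist(item[1], target))
    return name if math.dist(position, target) <= tolerance else None

print(duck_at(from_north=3.0, from_west=2.0))   # a
print(duck_at(from_north=5.0, from_west=5.0))   # None
```

There is nothing to argue about here. Either a duck is at those coordinates or it isn't.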

The Translation Cost

When AI systems work with language, they're doing the same compression.

A concept exists in high-dimensional semantic space - a specific location with precise coordinates across hundreds or even thousands of dimensions.

To communicate that concept, it gets compressed into tokens - discrete symbols, words, pieces of language. This is like saying "the yellow duck near the corner" instead of giving exact coordinates.

The listener (another AI, or a human) receives those tokens and decompresses them back into semantic space - reconstructing an approximate location based on the description.

But the reconstruction isn't exact. It can't be. You lost precision in the compression. "Yellow duck near the corner" could mean several different positions.
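To see the loss in miniature, here is a toy version of that compress-and-decompress cycle. It is only an illustration, not how any real model tokenizes text: the three-word vocabulary, the 3-D vectors, and the nearest-neighbor `encode` function are all assumptions chosen to keep the example small.

```python
import math

# Toy "vocabulary": each token sits at one fixed point in a 3-D space.
# The vectors are invented; real systems use far more dimensions.
VOCAB = {
    "yellow": (0.9, 0.1, 0.0),
    "duck":   (0.1, 0.9, 0.1),
    "corner": (0.0, 0.2, 0.9),
}

def encode(concept):
    """Compress a point in the space into the single nearest token."""
    return min(VOCAB, key=lambda token: math.dist(VOCAB[token], concept))

def decode(token):
    """Decompress a token back into a point: the token's own location."""
    return VOCAB[token]

concept_1 = (0.7, 0.4, 0.1)
concept_2 = (0.8, 0.0, 0.2)

token_1 = encode(concept_1)
token_2 = encode(concept_2)
loss_1 = math.dist(concept_1, decode(token_1))

print(token_1, token_2)   # yellow yellow
print(f"{loss_1:.2f}")    # 0.37
```

Two different points collapse into the same token, and decoding gives back neither of them. The listener lands on the token's location, not on the original concept.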

Jumping In vs. Being There

When you're standing outside the pond and I say "get the yellow duck," you have to translate my words into a position, then physically enter the pond, disrupting everything.

When you're already standing still in the pond, close to the ducks, you can reach without splashing, without scattering the other ducks, without disturbing the system.

AI systems that are "in the semantic space" - that have learned the structure, that understand where concepts are positioned relative to each other - can navigate with less disruption. They're already there. They don't need to jump in from outside.

But they still have the translation problem. They still need to convert language (tokens) into semantic coordinates and back again.

Why Vague Descriptions Fail

"It's a duck. It's in the pond. It's kind of yellowish."

From outside the pond, with no other context, you could be pointing at dozens of ducks. The description is too compressed. Too much information was lost in the translation to language.

"It's 4 meters from the northern edge, 1.5 meters from the eastern edge, it's yellow, it's facing southwest."

Now you have enough information to reconstruct the position. Still not perfect - "4 meters" isn't infinitely precise - but close enough to identify one specific duck.

The more precise the description, the less ambiguity in the translation.
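You can watch the ambiguity shrink as details are added. The ducks below and the `candidates` helper are hypothetical, and the 0.5 m tolerance is an assumption about how loosely a stated distance gets interpreted.

```python
# Hypothetical ducks: (color, meters from north edge, meters from east edge, facing)
DUCKS = [
    ("yellow", 4.0, 1.5, "southwest"),
    ("yellow", 4.2, 6.0, "north"),
    ("yellow", 8.0, 1.4, "southwest"),
    ("white",  4.1, 1.6, "east"),
    ("yellow", 1.0, 9.0, "south"),
]

def candidates(color=None, from_north=None, from_east=None, facing=None, tol=0.5):
    """Return every duck consistent with the details that were actually given."""
    matches = []
    for duck in DUCKS:
        duck_color, north, east, heading = duck
        if color is not None and duck_color != color:
            continue
        if from_north is not None and abs(north - from_north) > tol:
            continue
        if from_east is not None and abs(east - from_east) > tol:
            continue
        if facing is not None and heading != facing:
            continue
        matches.append(duck)
    return matches

vague = candidates(color="yellow")
better = candidates(color="yellow", from_north=4.0)
precise = candidates(color="yellow", from_north=4.0, from_east=1.5, facing="southwest")

print(len(vague), len(better), len(precise))   # 4 2 1
```

Each extra detail removes candidates. "Yellowish" leaves four ducks, one measurement leaves two, and the full description leaves exactly one.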

The Tradeoff

Precise coordinates are accurate but awkward. Nobody talks like that. "Hey, can you pass me the object at coordinates X: 0.4m, Y: 0.2m, Z: 0.8m relative to my position?"

Vague language is natural but lossy. "Hey, can you pass me that thing over there?"

We accept the ambiguity because language is efficient for communication. But we pay for that efficiency with precision.

AI systems face the same tradeoff. Tokens (language) are how we communicate. But tokens are compressed representations of semantic coordinates. The compression is lossy. Precision is sacrificed for expressibility.
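One way to put rough numbers on the tradeoff is to treat a description as a choice of grid cell. This sketch assumes a hypothetical 10 m square pond divided into equal cells; the message only names a cell, and the listener guesses the cell's center. A finer grid costs more bits per message but leaves a smaller worst-case error.

```python
import math

POND_SIZE = 10.0  # hypothetical square pond, in meters

def tradeoff(cells_per_side):
    """Return (bits needed to name one cell, worst-case position error in meters)."""
    bits = 2 * math.log2(cells_per_side)        # one index per axis
    cell = POND_SIZE / cells_per_side           # side length of one cell
    worst_error = cell * math.sqrt(2) / 2       # cell center to cell corner
    return bits, worst_error

for cells in (2, 8, 32, 128):
    bits, error = tradeoff(cells)
    print(f"{cells:>3} cells per side: {bits:.0f} bits, worst-case error {error:.2f} m")
```

With 2 cells per side you are back to "northwest corner": 2 bits, and a guess that can be off by several meters. With 128 cells per side the guess is within a few centimeters, but now you are reading out coordinates instead of talking.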

The Takeaway

Describing a duck's position in language is fundamentally different from specifying its coordinates.

Language is compressed, interpreted, approximate, and perspective-dependent. Coordinates are precise, objective, and, within measurement error, unambiguous.

When we use words to point at concepts, we're compressing high-dimensional semantic positions into low-bandwidth language. Information is lost. Ambiguity is introduced.

The better the description, the less ambiguity. But you'll never get perfect precision through language alone.

Sometimes you need coordinates. Sometimes you need to already be in the pond.

Part of the Duck Pond Experiments series - making abstract concepts concrete.